Reproducing GPT-2

Published:

🧠💬 ChatGPT has become an integral part of my daily life. I grew increasingly curious about what was going on under the hood of the GPT model, so I spent my weekends 🛠️ building one myself, aiming to replicate OpenAI’s GPT-2 (124M) model.

🎓📚 Andrej Karpathy’s lecture series is hands-down the best resource to learn about Deep Learning and LLMs.

🚀 After a lot of tinkering, I finally finished building my GPT model and began training it on a slice of internet data. The whole journey gave me an even deeper appreciation for the ingenuity and exceptional engineering that go into OpenAI’s models. 🙌

🤖 I’m eagerly awaiting the launch of OpenAI’s open-source reasoning model, and now that I’ve got a better grasp of how LLMs work, I’m super excited to start applying them in my projects! 🔍⚙️

📂 GitHub Repository: nano GPT-2

🎥 Neural Networks: Zero to Hero by Andrej Karpathy: Lecture Series

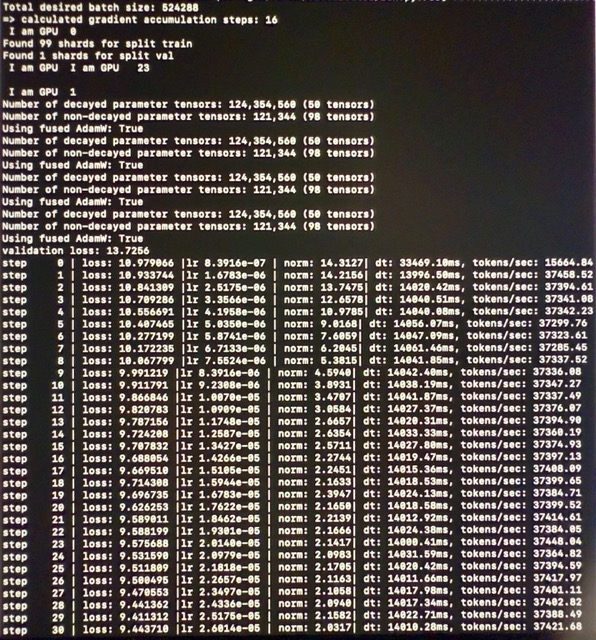

🧪 Finally training my model: